An interesting conversation over coffee with a client today gave me something to mull over. The question brought to the table was how some assessors, while engaged in an audit, bring up other services they offer, such as ASV scans, penetration testing and vulnerability scanning, and how this may look like a conflict of interest.

I will start by proclaiming that we aren’t QSAs. We do have a myriad of certifications, such as ISO and other personal certs in information security, but this article isn’t about our resume. It’s about the ever-important question of the role of the QSA and whether they should be providing advisory services.

Why we chose not to go the route of QSAs is for another article, but suffice to say, in the same way we work with CBs for ISO projects, we employ the same business model for PCI or any other certification project. We rabidly believe in the clear demarcation between those doing the audit and those doing the implementation and advisory. After all, statutory audit is in our DNA, and every single customer or potential customer we have requires a specific conflict check to ensure independence, so that we do not provide consulting work that may jeopardize our opinions when it comes to audit. Does anyone recall Enron? Worldcom? Waste Management? Goodbye, 90-year-old accounting firm.

We have worked with many QSAs in almost 14 years of doing PCI-DSS, and here, by QSAs I mean individuals as well as QSA-Cs (QSA Companies). Our group is collectively made up of senior practitioners in information security and compliance, so we don’t have fresh graduates or juniors going about advising C-level veterans with 20-plus years of experience on how to run their networks or business.

A QSA (Qualified Security Assessor) company, in a nutshell, is a company that is qualified by the PCI Security Standards Council (PCI SSC) to perform assessments of organizations against the PCI standards. Take note of the word: QUALIFIED. This becomes important because there is a very strict re-qualification program from the PCI SSC to ensure that the quality of QSAs is maintained. Essentially, QSAs are vouched for by the PCI SSC to carry out assessment tasks. Are all QSAs created equal? Probably not; based on our experience, some are better than others in specific aspects of PCI-DSS. Even the PCI SSC has a special group of QSAs under its Global Executive Assessor Roundtable (GEAR), which we will touch on later.

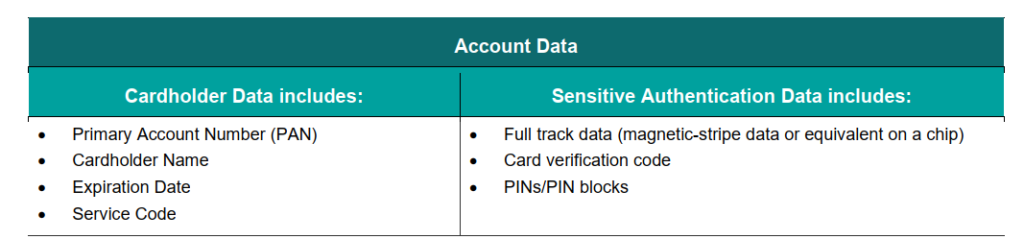

The primary function of a QSA company is to evaluate and verify an organisation’s adherence to the PCI DSS requirements. This involves a thorough examination of the organisation’s cardholder data environment (CDE) — including its security systems, network architecture, access controls, and policies — to ensure that they meet the PCI requirements.

Following the assessment, the QSA company will then prepare a Report on Compliance (RoC) and an Attestation of Compliance (AoC), which are formal documents that certify the organization’s compliance status. Please don’t get me started on the dang certificate because I will lose another year of my life to high blood pressure. These OFFICIAL documents are critical for the organization to demonstrate its commitment to security to partners, customers, and regulatory bodies. The certificate, however, can be framed and hung on the wall of your toilet, where it rightfully belongs. Right next to the toilet paper, which probably has a slightly higher value.

Anyway, QSAs have very specific roles defined by the SSC in the QSA Program Guide (2023):

– Validating and confirming Cardholder Data Environment (CDE) scope as defined by the assessed entity.

– Selecting employees, facilities, systems, and system components accurately representing the assessed environment if sampling is employed.

– Being present onsite at the assessed entity for the duration of each PCI DSS Assessment or perform remote assessment activities in accordance with applicable PCI SSC assessment guidance.

– Evaluating compensating controls, as applicable.

– Identifying and documenting items noted for improvement, as applicable.

– Evaluating customized controls and deriving testing procedures to test those controls, as applicable.

– Providing an opinion about whether the assessed entity meets PCI DSS Requirements.

– Effectively using the PCI DSS ROC Template to produce Reports on Compliance.

– Validating and attesting as to an entity’s PCI DSS compliance status.

– Maintaining documents, workpapers, and interview notes that were collected during the PCI DSS Assessment and used to validate the findings.

– Applying and maintaining independent judgement in all PCI DSS Assessment decisions.

– Conducting follow-up assessments, as needed

As you can see above, there is no advisory, recommendation, consultation or implementation work listed. It’s purely assessment and audit. What we do see, more often than not, is QSAs offering other services under separate entities. This isn’t specifically disallowed, but the SSC does recommend a healthy dose of independence:

The QSA Company must have separation of duties controls in place to ensure Assessor Employees conducting or assisting with PCI SSC Assessments are independent and not subject to any conflict of interest.

QSA Qualification requirements 2023

It’s hard to adjudge this point, but the one providing the audit shouldn’t be the one providing the consultation and advisory services. Some companies get around this by having a separate arm providing special consultation. This is where we step in: without any organizational gymnastics, we make a clear demarcation between who does the audit and who takes on the consultation and advisory role.

The next time you receive any proposal, be sure to ask the pertinent question: are they also providing support and advisory? A good part of the project lies there, not so much in the audit. We have actually seen cases where the engaged assessor flat out refused to provide any consultation, advisory, templates or anything else to assist the customer, citing conflict of interest, leaving the client high and dry unless they engaged the assessor for a separate consultative project. Is that the assessor’s fault? In theory, the assessor is simply abiding by the requirements for independence. On the other hand, it should have been mentioned before the engagement that the bulk of a PCI project is in the remediation part, and that guidance and consultation would definitely be needed! Leaving that out reeks of being a little disingenuous.

It’s frustrating for us when we get pulled in halfway through a project and ask: why haven’t you queried your engaged QSA on this question? The answer: because they want another sum of money for their consultative work, or they keep upselling services that the client isn’t sure it needs unless it pays for their advisory. What do you think their advisory is going to say? So while on paper it might be easy to state that independence has been established, in reality it’s often difficult to distinguish where the audit, recommendations, advisory and services start or end, as sometimes it’s all mashed. Like potatoes.

Here’s another official reference to this issue, in FAQ #1562 (shortened):

If a QSA Employee(s) recommends, designs, develops, provides, or implements controls for an entity, it is a conflict of interest for the same QSA Employee(s) to assess that control(s) or the requirement(s) impacted by the control(s).

Another QSA Employee of the same QSA Company (or subcontracted QSA) – not involved in designing, developing, or implementing the controls – may assess the effectiveness of the control(s) and/or the requirement(s) impacted by the control(s). The QSA Company must ensure adequate, documented, and defendable separation of duties is in place within its organization to prevent independence conflicts.

Again, this makes it fairly clear that QSAs providing both assessment and advisory/implementation services are not incorrect in doing so, but they need to ensure that proper safeguards are in place, presumably to be checked thoroughly against their requalification requirements, under section 2.2 “Independence” of the QSA requalification document. To save you time on reading, there isn’t much prescriptive guidance on how to ensure this independence, so we’re left with however the company decides on its conflict of interest policies. Our service is to ensure with confidence that the advice you receive is indeed independent and, as far as we know, benefits the customer, not the assessor. We have no skin in their services.
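For the more technically inclined, the FAQ #1562 rule quoted above boils down to a simple check: nobody who advised on a control should also be assessing the requirement it affects. The sketch below is purely illustrative and is not an official PCI SSC tool or method. The `Engagement` record, the `find_conflicts` function, the people and the requirement numbers are all hypothetical, made up for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Engagement:
    """Hypothetical record of who touched which PCI DSS requirement."""
    # requirement id -> people who recommended, designed or implemented controls for it
    advisors: dict[str, set[str]] = field(default_factory=dict)
    # requirement id -> people assigned to assess it
    assessors: dict[str, set[str]] = field(default_factory=dict)


def find_conflicts(engagement: Engagement) -> dict[str, set[str]]:
    """Return, per requirement, anyone who both advised on it and assesses it."""
    conflicts = {}
    for requirement, assessing in engagement.assessors.items():
        overlap = assessing & engagement.advisors.get(requirement, set())
        if overlap:
            conflicts[requirement] = overlap
    return conflicts


# Made-up example: alice helped design a control for 8.3 and is also assessing it.
engagement = Engagement(
    advisors={"8.3": {"alice"}, "10.2": {"bob"}},
    assessors={"8.3": {"alice", "carol"}, "10.2": {"carol"}},
)
print(find_conflicts(engagement))  # {'8.3': {'alice'}}
```

In this made-up case, carol can assess both requirements, since she advised on neither. The real difficulty, of course, is not the check itself but keeping an honest and documented record of who advised on what.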

In summary, QSAs can theoretically provide advisory services, but these should come separately from the audit, so make sure you get the right understanding before starting off on your PCI journey. Furthermore, and more concerningly, we’ve seen QSAs refuse to validate the scope provided to them, citing that this constitutes ‘consulting and advisory’ and needs additional payment. This is literally the first task in a QSA’s list of responsibilities, so call them out on it, or call us in and let us deal with them. These charlatans shouldn’t even be QSAs in the first place if this is what they are saying.

And finally, speaking of QSAs that are worth their salt: the primary one we work with, ControlCase, has been included in the PCI SSC Global Executive Assessor Roundtable 2024 (GEAR 2024).

https://www.pcisecuritystandards.org/about_us/global_executive_assessor_roundtable/

GEAR is an Executive Committee level advisory board comprising senior executives from PCI SSC assessor companies. It serves as a direct channel for communication between the senior leadership of payment security assessors and PCI SSC senior leadership.

In other words, if you want to know who the SSC looks to for PCI input, these are the guys. Personally, especially for a complex level 1 certification, this would be the first group of QSAs I would consider before approaching others, as these are nominated based on reputation, endeavor and commitment to the security standards. They are not companies that cough up money to sponsor events or conferences, look prominent in their dazzling booths and hand out free gifts, but are ultimately unable to deliver their projects properly to their clients.

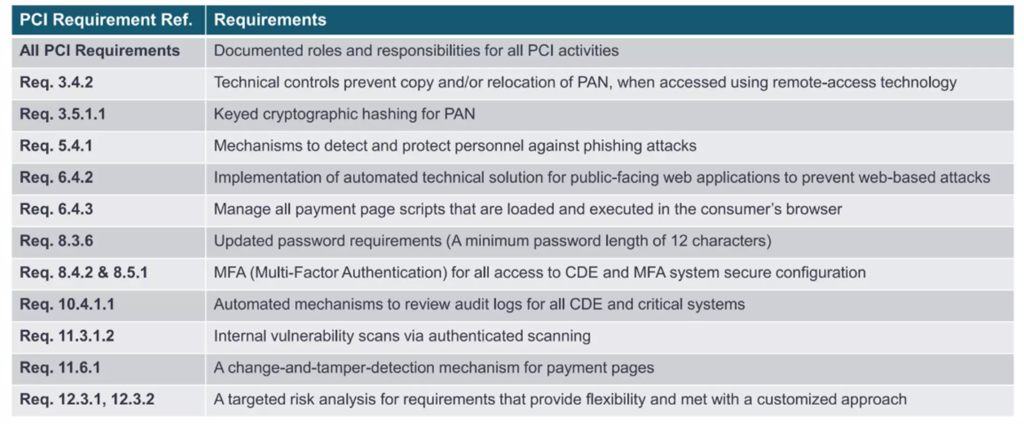

Let us know via email at pcidss@pkfmalaysia.com if you have any queries on PCI-DSS, especially the new version 4.0, or on any other compliance standards such as ISO 27001, NIST, RMIT etc!